The Coherent Error

On the misunderstanding that passes for knowledge

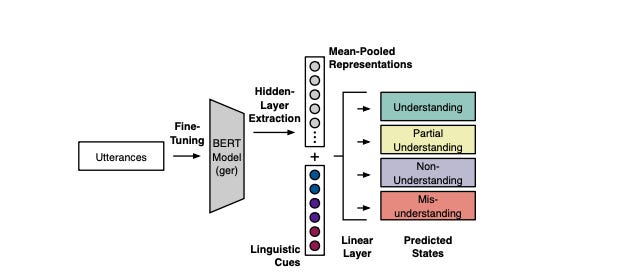

In a paper published this month, researchers at Bielefeld and Paderborn universities (Wang et al.) recorded 21 pairs of people, one explaining a board game to the other, and tried to predict the listener’s state of understanding moment by moment from measurable cues in the conversation. The classifiers they built, including a fine-tuned version of BERT, a language model that reads text bidirectionally to build richer contextual representations, reached around 82 percent accuracy overall.

The state they struggled with most was Misunderstanding. People actively forming incorrect mental models of what was being explained looked, to every cue the researchers could measure, almost exactly like people who were following along correctly.

That finding is small in scope and large in implication. The study covered 21 one-on-one conversations in a lab setting, a limitation the authors acknowledge. There is also a methodological wrinkle: the ground-truth labels came from listeners watching their own footage immediately after the interaction and annotating their states retrospectively. Memory is reconstructive, and a viewer who now understands the full explanation may retroactively mislabel earlier moments in the conversation. Even so, the findings are directionally credible, and they give empirical specificity to a problem that sits at the center of the science of learning.

The Wrong Distinction

The science of learning tends to organize itself around the gap between understanding and not understanding. Can the learner do this or not? Does the explanation land or not? The pedagogical response to that binary is reasonably well developed: formative assessment, feedback, retrieval practice, re-teaching.

The more consequential distinction is different. It is not the gap between understanding and not understanding. It is the gap between two kinds of not understanding.

The first kind is what Wang et al. call non-understanding: a learner who knows they are lost. They register the gap, make it visible, and the exchange has a chance to correct itself. This failure mode is tractable. The feedback loop works.

The second kind is misunderstanding: a learner who has built a mental model that feels coherent but is wrong. They do not register a gap because they do not experience one. From the inside, the explanation made sense.

This distinction has deep roots in the conceptual change literature. Chi’s work on misconceptions distinguishes between a knowledge gap, which is an absence that instruction can fill, and a misconception, which is a presence. An incorrect mental model already occupies the space where a correct one should go. Chi argues that misconceptions are ontological miscategorizations: learners assign a concept to the wrong category of thing, which generates explanations that have internal logic but rest on a false foundation.

Crucially, this is not a failure of the learner. Misconceptions are a natural and predictable output of how human learning works. Every learner builds mental models from prior experience, and those models are often wrong in systematic, predictable ways across entire populations. The same misconceptions appear reliably in the same domains, replicated across decades of research, because they are products of how minds generalize from available experience, not products of individual carelessness or inability.

The practical difference for instruction is significant. A learner who does not know what causes the seasons can be taught. A learner who believes summer is warmer because the earth is closer to the sun already has an answer. That answer is wrong, but it is internally consistent, it connects to other things they believe about heat and distance, and it makes predictions that feel confirming in many contexts. That learner does not need to be filled in. They need their existing model shown to be wrong, which is a different and harder instructional problem.

Where Prior Knowledge Comes In

The critical question is: why do some learners misunderstand while others simply do not know? The answer from cognitive science points to prior knowledge, which is the same variable that determines whether instruction works at all.

Learners do not misunderstand from a blank slate. They misunderstand because they bring existing mental models to new content and apply those models in the moment of explanation. When the existing model is approximately right, it acts as scaffolding and produces understanding. When it is wrong in a systematic way, it acts as a filter and produces misunderstanding. The explanation is the same. What changes is what the listener brings to it.

This is why Willingham argues that background knowledge is not supplementary to comprehension but constitutive of it. Prior knowledge is not preparation for learning. It is the mechanism by which explanation either lands or misfires. A learner with strong and accurate prior knowledge in a domain processes a new explanation against that foundation and integrates it correctly. A learner with weak or inaccurate prior knowledge processes the same explanation against a different foundation and may produce a coherent-feeling but wrong result.

The Wang et al. paper supports this indirectly through its surprisal finding. Surprisal measures how unexpected a word is given the words that came before it. Denser, more informationally rich language from the explainer correlated with listeners reporting they understood, not with confusion. Sweller’s cognitive load framework helps explain why. It distinguishes three types of cognitive load. Extraneous load comes from poor instructional design and should be minimized. Intrinsic load comes from the complexity of the material itself. Germane load is the effortful processing that actually builds schemas. High surprisal is not extraneous load. It is closer to intrinsic or germane load, the kind that, for a listener with sufficient prior knowledge, drives deeper processing and more durable learning. Tsipidi et al. (2024) found a related result: predictable language may allow attention to drift because it demands less processing. Challenge and comprehension are not opposites. But the challenge has to be tractable, and what makes it tractable is prior knowledge.
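For readers who want the surprisal measure made concrete, here is a minimal sketch. Surprisal is conventionally defined as the negative log probability of a word given its context. The toy bigram model, the add-one smoothing, the example sentences, and the function names below are all assumptions made for illustration. This is not the language model or the pipeline used in the paper.

```python
import math
from collections import Counter

def train_bigram(sentences):
    """Collect bigram statistics from whitespace-tokenized sentences."""
    vocab, context_counts, bigram_counts = set(), Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split()
        vocab.update(tokens[1:])                    # words that can be predicted
        context_counts.update(tokens[:-1])          # words that act as context
        bigram_counts.update(zip(tokens, tokens[1:]))
    return vocab, context_counts, bigram_counts

def surprisal(prev, word, model):
    """Surprisal in bits: -log2 P(word | prev), with add-one smoothing."""
    vocab, context_counts, bigram_counts = model
    prob = (bigram_counts[(prev, word)] + 1) / (context_counts[prev] + len(vocab))
    return -math.log2(prob)

# Invented example sentences in the spirit of a board-game explanation.
model = train_bigram([
    "you move the piece",
    "you move the token",
    "you roll the dice",
])

expected_word = surprisal("you", "move", model)    # frequent continuation
unexpected_word = surprisal("you", "dice", model)  # never follows "you" here
print(round(expected_word, 2), round(unexpected_word, 2))
```

In this toy corpus the word that usually follows "you" carries fewer bits than one that never does. A denser explanation, in this sense, is one whose words are on average less predictable from what came before.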

The implication for instruction is that building accurate prior knowledge cumulatively is the precondition for explanation working as intended. Where prior knowledge is absent, new content may not land. Where prior knowledge is wrong, new content may land incorrectly and consolidate an error. Instruction that skips the diagnostic step, that assumes a foundation without checking whether it is there and accurate, is working blind.

Why It Is Hard to Detect

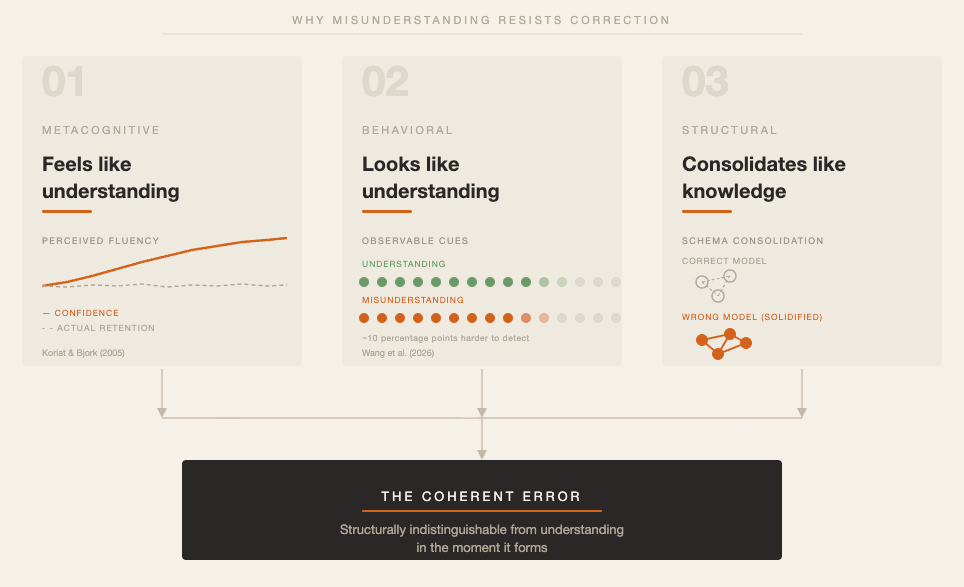

Misunderstanding has three properties that make it particularly resistant to correction, and all three are grounded in research.

The first is metacognitive: misunderstanding feels like understanding. Koriat and Bjork (2005) showed that judgments of learning are driven by the fluency of processing rather than by actual retention. When information feels easy to take in, learners rate it as well-understood, even when later recall reveals otherwise. Misunderstanding produces exactly this fluency. The wrong model generates smooth processing because it is already there, already connected to other beliefs, already generating predictions. The learner does not experience friction because their model handles the input without conflict. Dunlosky et al. (2013) confirmed in a wide-ranging review that learners systematically overestimate how well they have understood material, particularly when they have used passive methods that generate fluency without requiring retrieval.

The second property is behavioral: misunderstanding looks like understanding. This is what the Wang et al. paper quantifies. The researchers measured gaze variation, information value in the explainer’s speech, and syntactic complexity. All three significantly predicted understanding states overall. But the classifier’s accuracy for Misunderstanding was roughly 10 percentage points below its accuracy for Non-Understanding. A listener who knows they are lost tends to show it in some measurable way. A listener who has misunderstood does not. The behavioral residue of misunderstanding and the behavioral residue of understanding are, to a sophisticated measurement instrument, nearly indistinguishable.
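The gap between overall accuracy and accuracy on a single state is easy to lose sight of, so a small sketch may help. The labels and predictions below are invented for illustration and are not the paper's data. The function name `per_class_accuracy` is likewise hypothetical. The point is only that a classifier can look respectable in aggregate while failing on the one state that matters most.

```python
from collections import Counter

def per_class_accuracy(true_labels, predicted_labels):
    """Fraction of items in each true class that were predicted correctly."""
    totals, correct = Counter(), Counter()
    for truth, guess in zip(true_labels, predicted_labels):
        totals[truth] += 1
        if truth == guess:
            correct[truth] += 1
    return {label: correct[label] / totals[label] for label in totals}

# Invented toy data: misunderstanding is mostly mistaken for understanding.
true_labels = (
    ["understanding"] * 10
    + ["non_understanding"] * 5
    + ["misunderstanding"] * 5
)
predicted_labels = (
    ["understanding"] * 9 + ["non_understanding"]
    + ["non_understanding"] * 4 + ["understanding"]
    + ["misunderstanding"] * 2 + ["understanding"] * 3
)

overall = sum(t == p for t, p in zip(true_labels, predicted_labels)) / len(true_labels)
by_class = per_class_accuracy(true_labels, predicted_labels)
print(overall, by_class)
```

Here most of the errors on the misunderstanding class are predictions of understanding, which is the confusion the essay describes: the two states leave nearly the same behavioral trace.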

The third property is structural: misunderstanding consolidates like knowledge. Willingham’s formulation in Why Don’t Students Like School? captures the mechanism: “memory is the residue of thought.” What gets encoded is not what the teacher said. It is what the learner was thinking. A learner processing an explanation through a wrong prior model is thinking their way through that model, making it more accessible and more confidently held with each use. The misunderstanding is not a gap waiting to be filled. It is filled, with the wrong content, and reinforced by the act of thinking.

Chi, Slotta, and de Leeuw (1994) documented this in science learning: learners assign scientific concepts to the wrong ontological type, and this mismatch generates explanations with internal logic built on a false foundation. A learner who treats electrical current as a substance rather than a rate will solve certain problems correctly and others wrongly, with no internal awareness of where the model breaks down.

What Instruction Can Do

None of this is a reason for pessimism. It is a reason for precision. Misconceptions are predictable. The same ones appear, in the same domains, across populations. That predictability is actionable. Instruction designed to anticipate, surface, and address specific wrong models is a different kind of instruction from instruction designed to deliver correct ones.

Dylan Wiliam’s work on formative assessment is built on exactly this principle. In Embedded Formative Assessment, Wiliam argues that assessment only functions formatively when the evidence it generates changes what a teacher or student does next. The instruments he advocates are specifically engineered to surface misunderstanding rather than just confirm correct answers. Hinge questions are designed so that the wrong answer reveals which specific misconception a learner holds, not simply that they got something wrong. Two learners can give the same wrong answer for entirely different reasons, and the instructional response to each is different. Instruments that cannot distinguish between those cases are not generating the evidence teachers need. This is a design problem, not a detection problem. It is solvable through deliberate question construction.
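One way to picture a hinge question is as a small data structure in which every wrong option is tagged with the misconception it is meant to reveal. The sketch below reuses the seasons example from earlier in this piece. The question wording, the misconception labels, the learner names, and the function `group_by_misconception` are all hypothetical. None of them comes from Wiliam's book.

```python
from collections import defaultdict

# Hypothetical hinge question: each option maps to (text, misconception tag).
# A tag of None marks the correct answer.
HINGE_QUESTION = {
    "prompt": "Why is summer warmer than winter?",
    "options": {
        "A": ("Earth is closer to the sun in summer.", "distance-causes-seasons"),
        "B": ("Earth's tilted axis changes the angle and duration of sunlight.", None),
        "C": ("The sun gives off more heat in summer.", "sun-output-varies"),
    },
}

def group_by_misconception(responses, question=HINGE_QUESTION):
    """Group learners by the misconception their chosen option signals."""
    groups = defaultdict(list)
    for learner, choice in responses.items():
        _, misconception = question["options"][choice]
        groups[misconception or "no misconception detected"].append(learner)
    return dict(groups)

# Invented responses from four learners.
responses = {"Ana": "A", "Ben": "B", "Caz": "A", "Dee": "C"}
groups = group_by_misconception(responses)
print(groups)
```

The design choice that matters is in the options, not the code: a wrong answer is only diagnostic if it was written so that one specific wrong model, and no other, would lead a learner to choose it.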

Roediger and Butler (2011) point to retrieval practice as a complementary tool. Asking learners to produce and explain rather than recognize and confirm externalizes the structure of their mental models in ways that passive engagement cannot. A learner who has misunderstood will generate a retrievable representation of their misunderstanding when asked to explain. The words they reach for, the connections they make or fail to make, the approximations they produce toward terms they almost have: these carry the shape of what they actually believe. Production makes the model visible. That visibility is what teachers need to adjust instruction in real time.

This is where the Wang et al. finding connects most directly to practice. The cues the researchers found most informative were derived from speech: what was said, how it was structured, how surprising it was. These properties exist just as readily in what learners say as in what explainers say. Every time a learner speaks in an explanatory interaction, they produce evidence about the structure of their mental model. Language is acoustic before it is textual. Those cues are present in every lesson, every one-on-one exchange, every explanatory conversation. The question is whether teachers, and the instructional and platform-based systems they use, are positioned to attend to them.

What the Paper Opens Up

The Wang et al. paper does not solve the misunderstanding problem. What it does is make part of it measurable: moment-by-moment understanding states can be predicted from behavioral cues, and the cues that carry the most information are linguistic ones derived from what is said and how. That is a useful finding, because what can be measured can be designed against.

The tools the science of learning already provides (diagnostic questioning, retrieval practice, deliberate prior knowledge activation) are all responses to the same underlying problem: misunderstanding is nearly indistinguishable from understanding in the moment it forms. Each of those tools works by forcing production rather than passive reception. Production reveals model structure. It makes the wrong model visible in a way that ambient cues cannot. That is the consistent thread from Wiliam’s hinge questions to Roediger’s testing effect to the Wang et al. finding about linguistic cues.

For teachers, this points toward a practical principle: the more a learner is asked to generate language about what they have just heard, the more evidence is available about whether what they heard became what was intended. Explanation back to the teacher, in the learner’s own words, is not just a pedagogical nicety. It is the most accessible version of what Wang et al.’s classifiers were trying to do with gaze tracking and neural networks. And it requires no instrument beyond what the science already recommends: production that forces a mental model into language, where it can finally be seen.